Gated open-source models: treat your model catalog like a control plane

When ‘use this model’ becomes an approval flow, your model catalog stops being a list—and becomes the control plane for licensing, risk, evaluation, and cost guardrails.

KMS ITC

Enterprises don’t usually get stuck on which model is best.

They get stuck on how model access is controlled once open-source models enter the building:

- Who is allowed to deploy which model—and why?

- What licenses are acceptable?

- How do you prevent “shadow models” running without safety and evaluation?

- How do you observe usage and cost across teams?

Microsoft’s work to make Hugging Face gated models accessible inside Azure AI Foundry is interesting because it turns “model choice” into something more architecturally useful: a governed access and deployment flow.

1) What changed (and why it matters)

“Hugging Face gated models” are models that require an explicit access step (requesting access and accepting the publisher’s conditions) before they can be used, rather than being anonymously downloadable.

When that gating experience is pulled into an enterprise platform surface (like Foundry), you get a practical shift:

- Model access becomes a workflow (not a developer side quest)

- Approvals become auditable (who approved what, when, and for which purpose)

- Deployment gets standardised (fewer one-off, unpatched, unobserved endpoints)
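To make “auditable” concrete, here is a minimal sketch of an approval record written to an append-only log. Every name in it (`AccessApproval`, `record_approval`, the field names) is hypothetical, and the rule that a requester cannot approve their own request is an assumption for illustration, not something Foundry or Hugging Face prescribes.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class AccessApproval:
    """One auditable decision: who approved what, when, and for which purpose."""
    model_id: str      # hypothetical catalog identifier, e.g. "org/model"
    requested_by: str
    approved_by: str
    purpose: str
    approved_at: str   # ISO 8601 timestamp in UTC


def record_approval(
    log: List[str],
    model_id: str,
    requested_by: str,
    approved_by: str,
    purpose: str,
    now: Optional[datetime] = None,
) -> AccessApproval:
    """Append a JSON line describing the approval and return the record."""
    # Assumed policy: separation of duties between requester and approver.
    if requested_by == approved_by:
        raise ValueError("requester cannot approve their own request")
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    approval = AccessApproval(model_id, requested_by, approved_by, purpose, timestamp)
    log.append(json.dumps(asdict(approval), sort_keys=True))
    return approval
```

In practice the list would be replaced by whatever durable, append-only store your platform already uses for audit events.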

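This matters because open-source models are increasingly strategic, but they are also part of your software supply chain: third-party artefacts with their own licenses, provenance, and update cycles.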

2) The architectural implication: your model catalog is now a control plane

Treat your model catalog like you treat:

- an API gateway (policy + identity + observability)

- a container registry (provenance + scanning + version pinning)

- a data catalog (classification + stewardship + access controls)

In other words: the catalog shouldn’t just answer “what models exist?”.

It should enforce “what must be true before this model can be used in production?”.

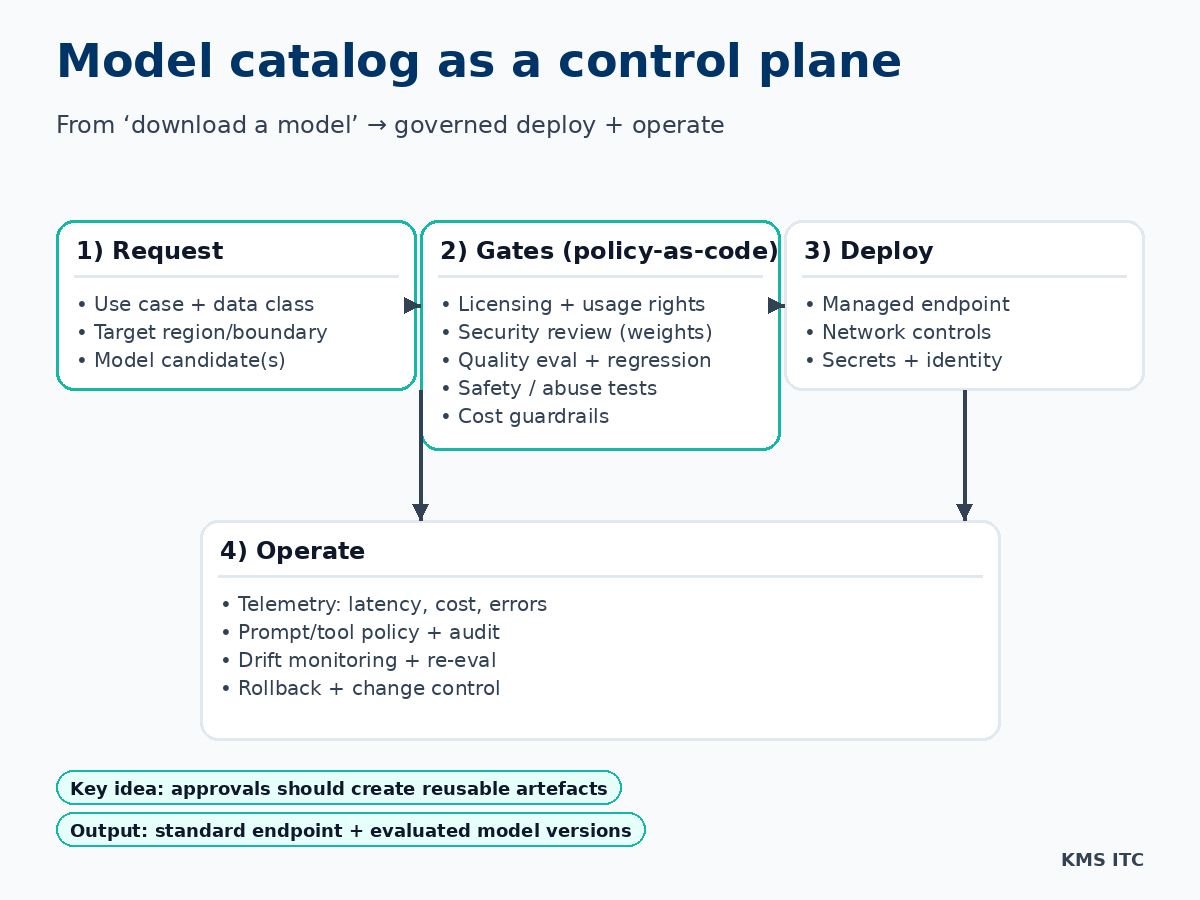
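One way to picture “what must be true before this model can be used in production?” is a function that returns the list of blockers for a catalog entry. This is a sketch under stated assumptions: the `CatalogEntry` fields, the `ALLOWED_LICENSES` allowlist, and the four conditions are illustrative choices, not a description of any real catalog’s schema.

```python
from dataclasses import dataclass
from typing import List, Optional

# Assumption: an example allowlist; your legal team defines the real one.
ALLOWED_LICENSES = {"apache-2.0", "mit"}


@dataclass(frozen=True)
class CatalogEntry:
    model_id: str
    license: str
    revision: Optional[str]        # pinned revision, like an image digest in a registry
    gated_access_accepted: bool    # the explicit access step has been completed
    eval_passed: bool              # the evaluation pack met its acceptance criteria


def production_blockers(entry: CatalogEntry) -> List[str]:
    """Return every reason this entry may not be used in production (empty = allowed)."""
    blockers = []
    if entry.license.lower() not in ALLOWED_LICENSES:
        blockers.append(f"license '{entry.license}' is not on the allowlist")
    if not entry.revision:
        blockers.append("revision is not pinned")
    if not entry.gated_access_accepted:
        blockers.append("gated access has not been accepted")
    if not entry.eval_passed:
        blockers.append("evaluation has not passed")
    return blockers
```

Returning all blockers at once, rather than failing on the first, gives the requesting team a complete to-do list in a single pass.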

3) A reference pattern: model catalog as a control plane

If you want a CTO/architect-grade pattern, make model onboarding produce reusable artefacts:

- a model risk record (license, intended use, known limitations)

- an evaluation pack (offline eval set + regression tests + acceptance criteria)

- a deployment template (network boundary, identity, logging)

- a cost envelope (rate limits, budgets, caching strategy)

The goal isn’t “more governance”. The goal is repeatable, automatable decisions.
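The four artefacts above can be sketched as plain data structures, which is what makes the decisions automatable. All class and field names below are hypothetical; they simply mirror the bullets, and a real schema would carry more detail.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ModelRiskRecord:
    license: str
    intended_use: str
    known_limitations: List[str]


@dataclass
class EvaluationPack:
    eval_set_path: str                      # location of the offline eval set
    regression_tests: List[str]
    acceptance_criteria: Dict[str, float]   # metric name -> minimum score


@dataclass
class DeploymentTemplate:
    network_boundary: str
    identity: str
    logging_enabled: bool


@dataclass
class CostEnvelope:
    requests_per_minute: int
    monthly_budget_usd: float
    caching_strategy: str


@dataclass
class OnboardingBundle:
    """Everything model onboarding is expected to produce for one model."""
    model_id: str
    risk: Optional[ModelRiskRecord] = None
    evaluation: Optional[EvaluationPack] = None
    deployment: Optional[DeploymentTemplate] = None
    cost: Optional[CostEnvelope] = None


def missing_artefacts(bundle: OnboardingBundle) -> List[str]:
    """List the artefacts that onboarding has not produced yet."""
    names = ("risk", "evaluation", "deployment", "cost")
    return [name for name in names if getattr(bundle, name) is None]
```

A bundle with no missing artefacts is a natural precondition for promoting a model out of the sandbox.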

4) Tradeoffs you should force into the open

Gating and catalog workflows don’t remove tradeoffs—they expose them.

Here are the ones worth making explicit:

- Speed vs safety: How fast can a team get from “I want to try a model” to a governed sandbox? What’s the path to production?

- Central control vs product autonomy: Which decisions are platform-level defaults, and which are per-product exceptions?

- Vendor surface vs portability: Are you standardising on a cloud-native catalog and endpoints, or building a model gateway you can move?

- Cost vs capability: Are you funding higher-end models centrally, or pushing cost ownership to product P&Ls?

5) What to implement (pragmatic next steps)

If you’re scaling GenAI across multiple teams, a good 30–60 day plan looks like:

- Define model tiers (sandbox / internal / customer-facing / regulated)

- Create an onboarding checklist for each tier (license, evals, safety, ops)

- Standardise deployment (one paved-road endpoint pattern)

- Add gates that run automatically (eval + safety + cost checks in CI)

- Make usage observable (who used what model, where, and what it cost)

The “win condition”: model choice becomes a fast, governed decision—not a months-long exception process.
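As a rough illustration of tiers plus automatic gates, the sketch below maps each tier to the checks it requires and reports what is still missing. The tier names come from the list above; which checks each tier requires is an assumption made for the example, and `gate` is a hypothetical helper.

```python
from enum import Enum
from typing import List, Set, Tuple


class Tier(Enum):
    SANDBOX = "sandbox"
    INTERNAL = "internal"
    CUSTOMER_FACING = "customer-facing"
    REGULATED = "regulated"


# Assumption: an example mapping; each organisation decides its own.
REQUIRED_CHECKS = {
    Tier.SANDBOX: {"license"},
    Tier.INTERNAL: {"license", "evals", "cost"},
    Tier.CUSTOMER_FACING: {"license", "evals", "safety", "cost"},
    Tier.REGULATED: {"license", "evals", "safety", "ops", "cost"},
}


def gate(tier: Tier, passed: Set[str]) -> Tuple[bool, List[str]]:
    """Return (allowed, missing checks) for a model targeting the given tier."""
    missing = sorted(REQUIRED_CHECKS[tier] - passed)
    return (not missing, missing)
```

A CI job could call `gate` with the checks that passed in the pipeline and exit non-zero when the first element is `False`, so a sandbox experiment stays fast while a customer-facing release cannot skip safety or cost checks.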

6) What to do next

If you’re trying to operationalise open-source models safely, start by designing your model catalog control plane:

- policy-as-code gates

- evaluation-as-a-release-criterion

- standard endpoints with strong identity + logging
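“Evaluation-as-a-release-criterion” can be sketched as a comparison between a candidate’s offline scores, fixed minimum thresholds, and the currently deployed baseline. The function name, the metric names in the usage note, the default tolerance, and the assumption that higher scores are better are all illustrative.

```python
from typing import Dict, List, Tuple


def release_allowed(
    baseline: Dict[str, float],
    candidate: Dict[str, float],
    thresholds: Dict[str, float],
    max_regression: float = 0.02,
) -> Tuple[bool, List[str]]:
    """Check candidate scores against minimums and against the baseline.

    Assumes every metric is "higher is better".
    """
    failures = []
    for metric, minimum in thresholds.items():
        score = candidate.get(metric)
        if score is None:
            failures.append(f"{metric}: missing from candidate results")
            continue
        if score < minimum:
            failures.append(f"{metric}: {score:.3f} is below the minimum {minimum:.3f}")
        base = baseline.get(metric)
        # Only compare against the baseline when it reported this metric.
        if base is not None and base - score > max_regression:
            failures.append(f"{metric}: regressed from {base:.3f} to {score:.3f}")
    return (not failures, failures)
```

For example, a candidate scoring 0.85 on a hypothetical `accuracy` metric clears a 0.80 minimum but would still be blocked if the baseline scored 0.90, because the drop exceeds the tolerance.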

If you want, I can help you:

- define a model tiering scheme + onboarding workflow

- set up an evaluation harness (offline + online)

- design a portable model gateway/control plane pattern

Reach out via /contact and tell me: (1) your most important GenAI use case, and (2) your strongest constraint (risk, cost, latency, or data boundary).