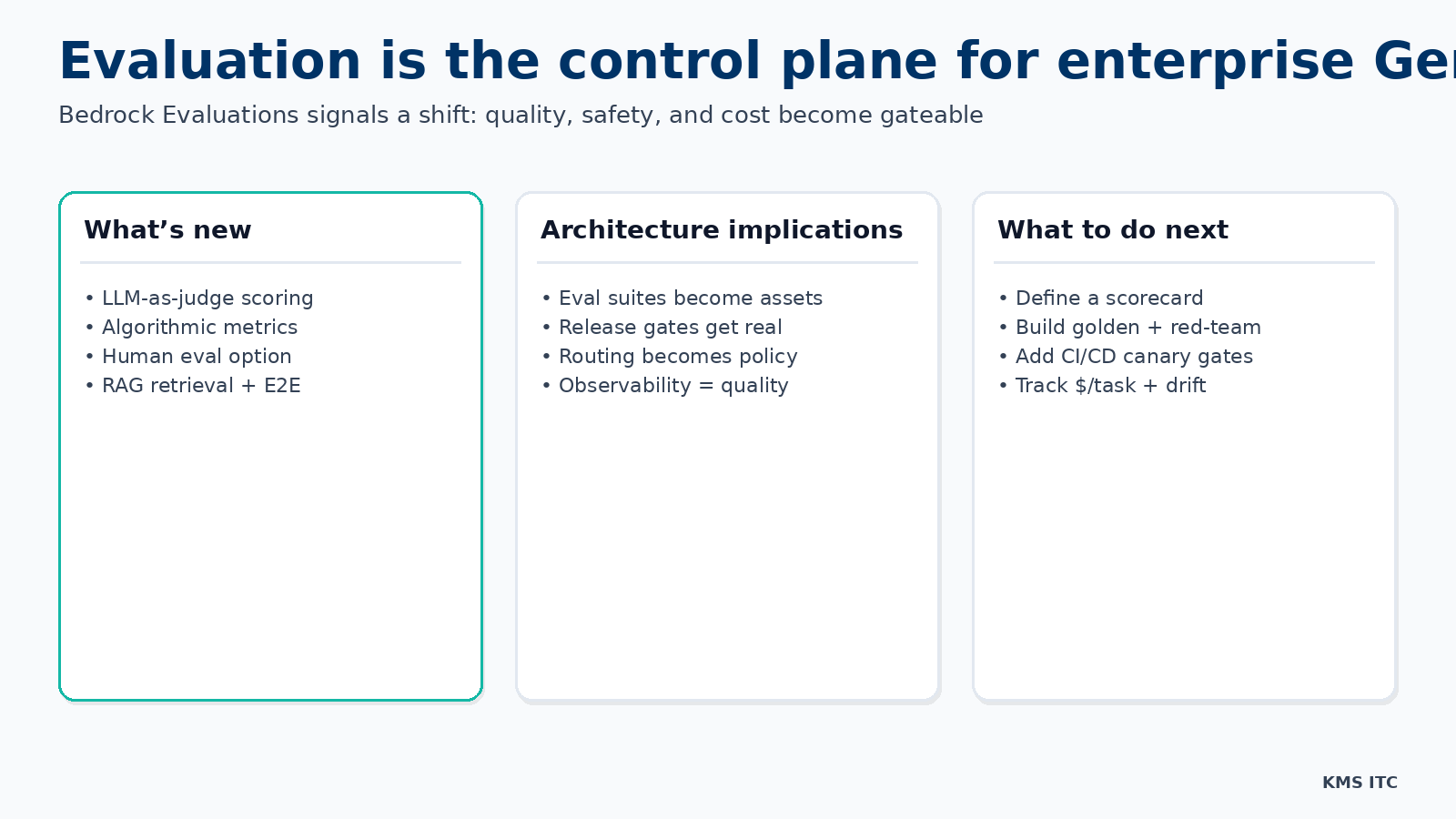

Evaluation is the control plane for enterprise GenAI: what Bedrock Evaluations implies for architecture and operating model

When evaluation becomes a managed capability (LLM-as-judge, algorithmic metrics, human review, RAG scoring), you can finally gate GenAI changes like software releases. Here’s the reference architecture and tradeoffs.

KMS ITC

Most enterprise GenAI programs hit the same wall:

- The demo works.

- The pilot works most of the time.

- Then a small prompt/model/RAG tweak ships… and trust collapses.

Traditional software has a control plane: CI, tests, release gates, canaries, rollbacks.

GenAI needs the same thing, but the “tests” are fuzzier: quality, hallucination risk, safety constraints, latency, and cost-per-task.

AWS’ Bedrock Evaluations is a signal worth paying attention to: evaluation is moving from “spreadsheet + vibes” to a platform primitive you can standardise, automate, and govern.

1) The capability jump (what matters, not the feature list)

Bedrock Evaluations packages a set of evaluation modes that map cleanly to enterprise needs:

- LLM-as-judge scoring for correctness/completeness/harmfulness

- Algorithmic NLP metrics (e.g., exact match-style and similarity-style measures)

- Human evaluation workflows when you need calibrated judgement

- RAG-specific scoring (retrieval quality and end-to-end response quality)

That mix matters because enterprises don’t have “one kind” of risk.

Some outputs can be validated mechanically. Some require a judge model. Some require a human.

If you can’t combine these consistently, you can’t operate GenAI at scale.

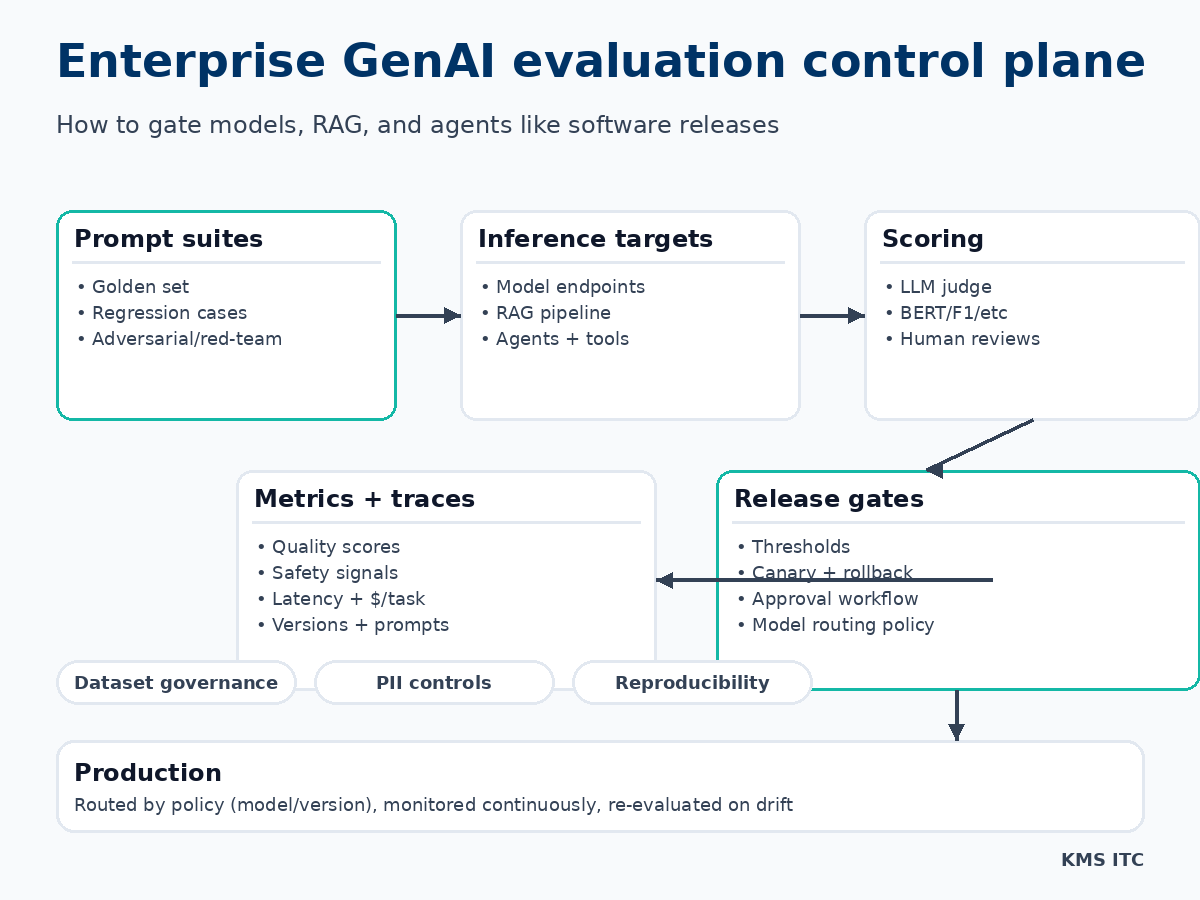

2) The architecture implication: you need an evaluation control plane

Treat evaluation as shared infrastructure, not a per-team side quest.

A practical reference architecture looks like this:

The key design move

Separate two planes:

- Application plane: your RAG apps, agents, workflows, UIs, APIs

- Control plane: evaluation suites, scoring, trace capture, release gates, routing policy

Once you do this, a lot of “GenAI chaos” becomes normal platform engineering:

- teams propose a change (prompt, retrieval chunking, model swap, tool policy)

- the control plane evaluates it against agreed thresholds

- releases are gated, canaried, and rolled back based on real signals

3) The tradeoffs (what will bite you if you don’t design for it)

Tradeoff A: LLM-as-judge can be wrong (and biased)

Judge models are useful, but they’re not truth.

Mitigations:

- use multiple judges for high-stakes classes (or sample + human audit)

- keep a small human-calibrated set as an anchor

- track judge drift like any other dependency

Tradeoff B: evaluation cost is real

If you score every request, you’ll pay for it.

Patterns that work:

- evaluate per release (candidate vs baseline) with a curated suite

- sample in production for drift detection, not full scoring

- tie evaluation budgets to business criticality (tier-1 workflows get more spend)

Tradeoff C: you need dataset governance (or you’ll leak PII)

Your prompt suites and traces become sensitive assets.

Minimum controls:

- classification + redaction (PII/PHI)

- data residency rules

- retention policy

- access controls and audit logs

Tradeoff D: “quality” is multi-dimensional

If you optimise only for correctness, you may regress latency or cost.

A workable scorecard normally includes:

- task success / correctness

- faithfulness (hallucination risk) for RAG

- safety/harmfulness constraints

- latency (p50/p95)

- $/task and token usage

4) What to standardise in an enterprise operating model

(1) A shared scorecard

Define 6–10 metrics your org will actually gate on.

Example gate policy:

- block release if correctness drops >2% on golden set

- block if harmfulness rises above threshold

- block if p95 latency or $/task increases beyond budget

(2) A “golden set” + a red-team set

You need both:

- Golden set: representative tasks with expected outputs (or expected properties)

- Red-team set: prompt injection attempts, policy bypass, data exfil probes, edge cases

(3) Routing as policy (not hard-coded)

When evaluation exists, model selection becomes a governed decision:

- route by workload class (summarise vs extract vs reason)

- route by data class (public vs sensitive)

- route by cost/latency SLO

(4) Release gates integrated into CI/CD

Make evaluation a step like tests:

- candidate evaluated vs baseline

- publish a scorecard artefact

- require approval for tier-1 workflows

- canary in production with rollback triggers

5) A practical “start this week” checklist

If you want this to be real (not theatre):

- Pick one workflow that matters (customer email drafting, ticket triage, knowledge assistant).

- Create 30–80 prompts (golden + red-team).

- Define a scorecard with thresholds for:

- correctness/success

- safety

- p95 latency

- $/task

- Run a baseline and store the results.

- Add a release gate: “no deploy without scorecard delta.”

The goal isn’t perfection. It’s turning GenAI change from “ship and pray” into measurable iteration.

Sources

If you’re rolling out GenAI across multiple teams and want a lightweight evaluation + release governance model (scorecard, gates, routing policy, and reference architecture), reach out via /contact and we’ll help you stand it up.