Scaling Postgres without sharding: the real control plane is reliability engineering

Why a single-writer Postgres architecture can go further than you think—if you treat caching, pooling, rate limits, and blast-radius isolation as first-class design primitives.

KMS ITC

Most teams hit the same fork in the road:

- Postgres is getting hot.

- Incidents are clustering around the database.

- Somebody says “we need to shard.”

Sharding can be the right move.

But it’s also one of the most expensive architectural programs you can start—because it forces application changes everywhere: data access patterns, transactions, query assumptions, and operational tooling.

A better question to ask first is:

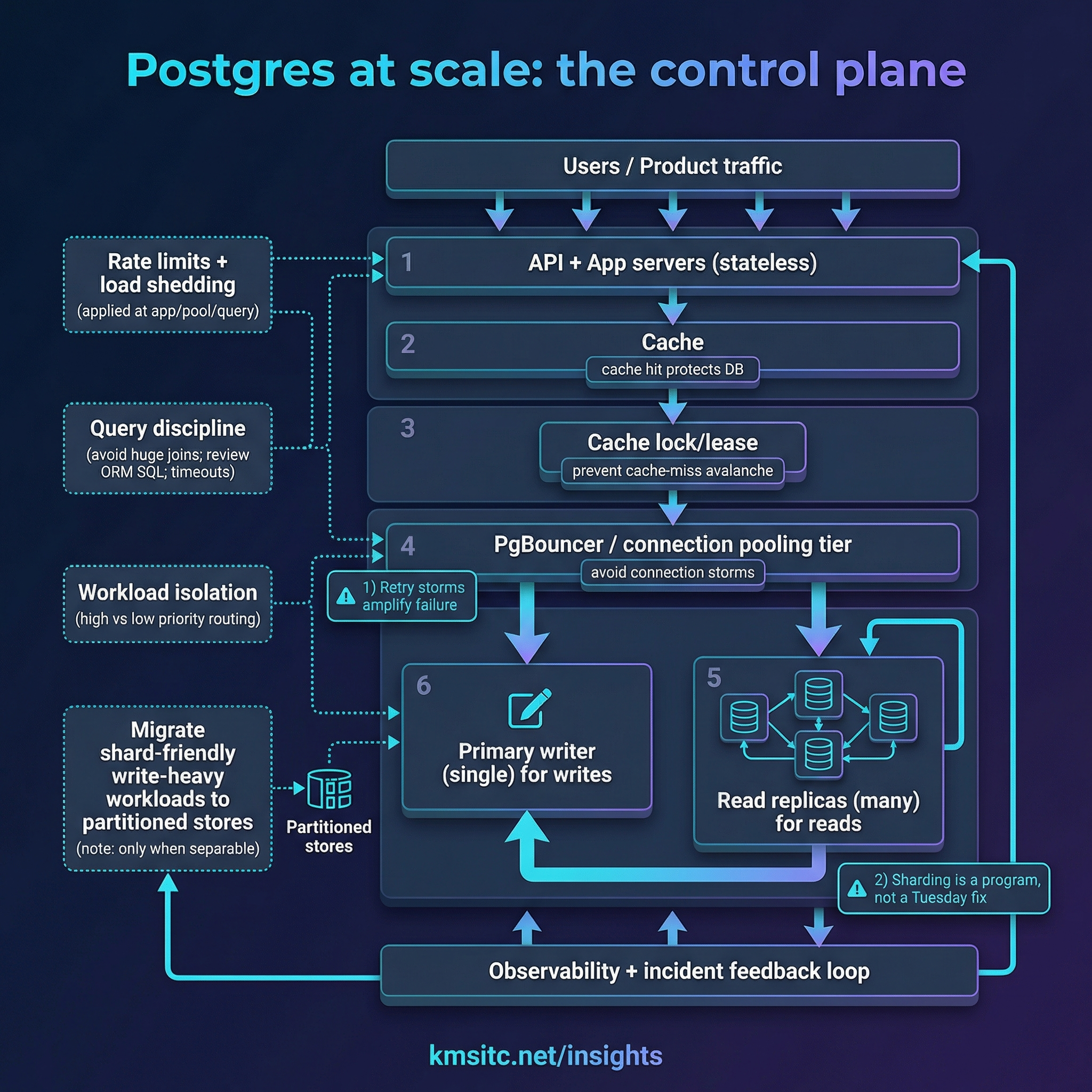

Have we built the reliability control plane that keeps a read-heavy system from collapsing onto the primary writer?

A ByteByteGo write-up based on publicly shared details from the OpenAI engineering team is a useful case study here: OpenAI reportedly pushed a single-writer Postgres design (with read replicas) to extreme scale by treating reliability as a system problem, not a “bigger database” problem.

1) The architecture choice: single writer + read replicas

A single-primary architecture is simple:

- one primary handles all writes

- read replicas handle most reads

This creates an obvious bottleneck: writes can’t be horizontally distributed.

So why keep it?

Because for many product workloads:

- reads dominate

- the most common failure mode is not “the database can’t read” but “something upstream dumped too much load onto the writer”

- sharding isn’t a tweak; it’s a multi-month program with cross-cutting changes

The pragmatic approach is: push the current architecture as far as you can safely, and treat sharding as a future lever when the workload truly demands it.

2) The real enemy: cascading failure loops

At scale, many database incidents are not “Postgres is slow.”

They’re feedback loops:

- cache hit rate drops

- a surge of cache misses hits the database

- latency rises, timeouts begin

- clients retry

- retries amplify load → even more timeouts

This is how a read-heavy system can still melt a database.
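The retry step is the part of this loop a client can bound on its own. Here is a minimal sketch, assuming a hypothetical `op` callable that raises `TimeoutError` when the database is slow. It uses capped exponential backoff with full jitter and a fixed attempt budget. The function names and default values are illustrative, not taken from the case study.

```python
import random
import time


def backoff_delays(max_attempts=4, base=0.1, cap=2.0, rng=random.random):
    """Yield one sleep duration per retry: capped exponential backoff with full jitter."""
    for attempt in range(max_attempts - 1):
        ceiling = min(cap, base * (2 ** attempt))
        yield rng() * ceiling  # full jitter: anywhere between 0 and the ceiling


def call_with_retries(op, max_attempts=4, base=0.1, cap=2.0, sleep=time.sleep):
    """Run op(); retry on TimeoutError up to max_attempts total tries, then give up."""
    delays = backoff_delays(max_attempts, base, cap)
    while True:
        try:
            return op()
        except TimeoutError:
            delay = next(delays, None)
            if delay is None:
                raise  # budget exhausted: surface the failure instead of adding load
            sleep(delay)
```

The jitter spreads retries out so that clients that failed together do not all come back at the same instant. The attempt cap puts an upper bound on how much extra load one failing request can generate.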

Two control-plane primitives matter here:

- cache lock / lease: when many requests miss the same key, only one request repopulates it; the rest wait for that result instead of querying the database themselves

- rate limits + load shedding: stop uncontrolled surges before they become retry storms
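To make the cache lock idea concrete, here is a minimal in-process sketch. It assumes a hypothetical `loader` function that stands in for the database read. A production setup would more likely hold the lease in the shared cache tier, but the shape is the same: the first request to miss becomes the leader, and everyone else waits on its result.

```python
import threading


class SingleFlightCache:
    """In-process cache where concurrent misses on one key share a single load."""

    def __init__(self, loader):
        self._loader = loader   # hypothetical function: key -> value (the database read)
        self._values = {}
        self._inflight = {}     # key -> Event that is set when the leader finishes
        self._lock = threading.Lock()

    def get(self, key):
        while True:
            with self._lock:
                if key in self._values:
                    return self._values[key]
                event = self._inflight.get(key)
                leader = event is None
                if leader:
                    # First miss for this key: take the lease.
                    event = threading.Event()
                    self._inflight[key] = event
            if leader:
                try:
                    value = self._loader(key)
                    with self._lock:
                        self._values[key] = value
                    return value
                finally:
                    # Release the lease and wake followers, even if the load failed.
                    with self._lock:
                        del self._inflight[key]
                    event.set()
            else:
                # Follower: wait for the leader, then loop and re-check the cache.
                event.wait()
```

If the leader's load fails, followers wake up, find nothing cached, and one of them takes over as leader. The sketch leaves out expiry and a wait timeout, both of which a real deployment would need.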

3) Connection storms: fix them at the protocol boundary

A classic scaling cliff is connections.

Even if your database has CPU headroom, a spike in client connections can degrade performance or exhaust limits.

The standard pattern is to introduce a connection pooling tier (commonly PgBouncer) and treat it like production infrastructure:

- run it as a first-class tier

- scale it horizontally by running multiple instances

- keep it in the same region as the database to avoid extra network latency

Connection pooling doesn’t make queries cheaper.

It makes your system survivable under spiky traffic.
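The idea behind a pooling tier can be illustrated in-process. The sketch below is an analogy, not PgBouncer's implementation: PgBouncer does this at the wire-protocol level, in front of the database. The `connect` callable, the pool size, and the wait budget are all hypothetical. The point is that many callers share a fixed number of connections, and callers fail fast when none frees up in time.

```python
import queue


class PoolExhausted(Exception):
    """Raised when no pooled connection frees up within the wait budget."""


class BoundedPool:
    def __init__(self, connect, size):
        # Open exactly `size` connections up front; the database never sees more.
        self._idle = queue.Queue(maxsize=size)
        for _ in range(size):
            self._idle.put(connect())

    def run(self, work, wait_seconds=0.5):
        """Borrow a connection, run work(conn), and always return the connection."""
        try:
            conn = self._idle.get(timeout=wait_seconds)
        except queue.Empty:
            # Fail fast instead of queueing forever behind a saturated database.
            raise PoolExhausted("no free connection") from None
        try:
            return work(conn)
        finally:
            self._idle.put(conn)
```

The fixed size is what protects the database from a connection spike. The bounded wait keeps a slow database from turning into an unbounded queue of blocked callers.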

4) Query discipline: prevent “one query takes down the world”

At scale, the most dangerous queries are often:

- complex joins in OLTP hot paths

- ORM-generated SQL no one ever read

- long-lived transactions that keep vacuum/autovacuum from reclaiming dead rows

The pattern we like is:

- measure and rank “top CPU queries”

- set timeouts aggressively (and fail fast)

- redesign hot-path queries for predictable cost

- move heavy joins off the critical path (sometimes into the application layer or precomputed views)

In other words: make expensive behavior impossible, not merely discouraged.
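The "measure and rank" step can be sketched as follows, assuming query statistics have already been read into dictionaries. The field names `calls` and `total_exec_time` follow the `pg_stat_statements` view in PostgreSQL 13 and later. Execution time is only a proxy for CPU cost, and the sample rows in the usage example are invented for illustration.

```python
def rank_by_total_time(rows, top_n=3):
    """Return the top_n rows ordered by total execution time, with each row's share."""
    grand_total = sum(r["total_exec_time"] for r in rows) or 1.0
    ranked = sorted(rows, key=lambda r: r["total_exec_time"], reverse=True)
    return [
        {
            "query": r["query"],
            "calls": r["calls"],
            "mean_ms": r["total_exec_time"] / max(r["calls"], 1),
            "share": r["total_exec_time"] / grand_total,
        }
        for r in ranked[:top_n]
    ]


# Invented sample rows, for illustration only (times in milliseconds).
sample = [
    {"query": "lookup user by id", "calls": 900000, "total_exec_time": 45000.0},
    {"query": "orders report join", "calls": 1200, "total_exec_time": 96000.0},
    {"query": "touch session row", "calls": 30000, "total_exec_time": 9000.0},
]
top = rank_by_total_time(sample, top_n=2)
```

Ranking by total time rather than mean time surfaces both kinds of offender: the rare but very slow join and the cheap query that runs a million times.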

5) Workload isolation: avoid the noisy neighbor effect

A scaling reality is that not all traffic is equal.

Some traffic is:

- revenue-critical

- security-critical

- latency-sensitive

Other traffic is “nice to have.”

If a new feature introduces a bad query pattern, the correct response is not “hope.”

It’s isolation:

- split low-priority and high-priority workloads

- route them to different pools/instances where needed

- preserve SLOs for the critical path

This turns “one feature launch” from a platform-wide incident into a bounded event.
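One way to sketch that isolation in application code is a bulkhead: each workload class gets its own concurrency budget, and a class that uses up its budget is shed rather than allowed to eat into another's. The class names and limits below are hypothetical. Routing to separate pools or instances applies the same idea at the infrastructure level.

```python
import threading


class Bulkhead:
    """Per-class concurrency limits so one workload class cannot starve another."""

    def __init__(self, limits):
        # limits: mapping of workload class name -> max concurrent requests
        self._slots = {name: threading.BoundedSemaphore(n) for name, n in limits.items()}

    def run(self, workload_class, work):
        slot = self._slots[workload_class]
        if not slot.acquire(blocking=False):
            # This class is at its limit: shed the request; other classes are unaffected.
            raise RuntimeError(f"{workload_class} is at capacity; request shed")
        try:
            return work()
        finally:
            slot.release()
```

With limits such as `{"critical": 50, "best_effort": 5}`, a badly behaved new feature in the `best_effort` class can hold at most five database-bound requests at once, whatever its traffic does.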

6) “Move write-heavy workloads elsewhere” does not mean “spray data across databases”

The phrase that confuses most architects is:

“Migrate write-heavy, shard-friendly workloads to a partitioned store (e.g., Cosmos DB).”

This only works when the workload is logically separable:

- it can be partitioned by a key (tenant/user/conversation)

- it does not require multi-table joins with your core relational truth

- it does not require cross-database transactions for correctness
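The first condition, partitioning by a key, comes down to a stable mapping from key to partition. Here is a minimal sketch, with a hypothetical function name and partition count. A managed partitioned store typically does this placement for you from the partition key you choose.

```python
import hashlib


def partition_for(key, partitions):
    """Map a partition key (tenant, user, or conversation ID) to a stable partition index."""
    # Use a cryptographic digest rather than the built-in hash(), which is
    # salted per process for strings and so is not stable across restarts.
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % partitions
```

Every read and write for `"tenant-42"` lands on the same partition, which is why the workload must not need joins or transactions that span keys. Note that plain modulo placement remaps most keys when the partition count changes, so it is shown here only to illustrate the routing idea.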

Examples that are often separable:

- event logs, telemetry, audit trails

- chat/session message history

- counters/quotas/rate-limit state

- background job state

The enterprise-architecture takeaway: you’re not “abandoning Postgres.”

You’re protecting it by keeping the primary writer focused on the data that must be strongly consistent and relational.

7) MVCC reality: write amplification is a tax you must design around

Postgres MVCC is great for concurrency.

But at high write volume it introduces:

- row version churn (write amplification)

- dead tuples and bloat (read amplification)

- more vacuum/autovacuum pressure

The clean strategy is the boring one:

- reduce unnecessary writes

- keep schema changes safe (avoid changes that rewrite whole tables or hold long locks)

- move the “write firehose” into stores that were built for it
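"Reduce unnecessary writes" often means coalescing. In Postgres, every `UPDATE` creates a new row version, so a thousand increments to one counter row leave a thousand versions behind for vacuum to clean up. Here is a minimal sketch, assuming a hypothetical `flush_fn` that issues one write per key:

```python
import threading
from collections import defaultdict


class CounterCoalescer:
    """Buffer counter increments in memory and write one delta per key on flush."""

    def __init__(self, flush_fn):
        self._flush_fn = flush_fn   # hypothetical: receives {key: delta}, writes once per key
        self._pending = defaultdict(int)
        self._lock = threading.Lock()

    def incr(self, key, delta=1):
        with self._lock:
            self._pending[key] += delta

    def flush(self):
        """Swap out the buffer and hand it to flush_fn; return the number of keys written."""
        with self._lock:
            batch, self._pending = dict(self._pending), defaultdict(int)
        if batch:
            self._flush_fn(batch)
        return len(batch)
```

Calling `flush()` on a timer turns N increments per key into one row update per key per interval. The cost is that increments still in the buffer are lost if the process dies before the flush, so this fits counters and quotas that tolerate small losses, not data that must be exact.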

8) A decision checklist (before you shard)

Before starting a sharding program, ask:

- Do we have cache locking/lease to prevent cache-miss avalanches?

- Do we have rate limits and load shedding at app + query boundaries?

- Are retries controlled (backoff, jitter, caps) to prevent retry storms?

- Do we have a pooling tier (PgBouncer) sized for spikes?

- Can we isolate low-priority workloads from the critical path?

- Have we identified and fixed the top CPU-consuming queries and reviewed ORM-generated SQL?

If the answer to most of these is “no,” sharding will not save you.

It will simply move the incident surface area.
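As one concrete instance of the rate-limit and load-shedding item in the checklist, here is a minimal token-bucket sketch. The class name, the parameters, and the injectable clock are illustrative assumptions. The sketch is not thread-safe; a shared limiter would need a lock or an external store.

```python
import time


class TokenBucket:
    """Admit requests at a sustained rate with a bounded burst; reject the rest."""

    def __init__(self, rate_per_sec, burst, clock=time.monotonic):
        self._rate = rate_per_sec
        self._burst = burst
        self._clock = clock
        self._tokens = float(burst)   # start full so an initial burst is allowed
        self._last = clock()

    def allow(self, cost=1.0):
        now = self._clock()
        # Refill in proportion to elapsed time, never beyond the burst size.
        self._tokens = min(self._burst, self._tokens + (now - self._last) * self._rate)
        self._last = now
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False   # caller sheds the request (for example, returns HTTP 429)
```

Rejecting a request immediately is cheaper than accepting it, timing out, and having the client retry, which is the feedback loop described in section 2.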

Closing: treat sharding as a program, and reliability as the control plane

At enterprise scale, “database scaling” is rarely solved by one trick.

The teams that win build a control plane that enforces:

- predictable load

- bounded failure

- fast recovery

Then—only when the workload truly demands it—they shard with intent.

If you’re trying to scale Postgres under rapid product growth and want a pragmatic path (pooling, caching controls, rate limits, workload isolation, and a sharding decision framework), reach out via /contact and we’ll help you design the control plane first.