Enterprise Architecture

AI Gateway as Control Plane: the enterprise pattern for Bedrock at scale

The biggest Bedrock architecture decision is not model choice. It is whether applications call models directly or go through an AI gateway control plane that owns policy, reliability, and cost governance.

Most enterprise AI programs stall at the same point: teams prove model quality, then hit operational chaos:

- inconsistent guardrails,

- duplicated auth logic,

- no reliable chargeback,

- unclear blast radius when a model endpoint degrades.

The most useful recent architectural signal from AWS is the AI gateway pattern for Amazon Bedrock. It matters not because gateways are new, but because it reframes GenAI delivery from “model calls in app code” to “platform policy in one control plane.”

What changed (and why it matters)

The AWS reference pattern puts Amazon API Gateway in front of Bedrock to centralize:

- Identity and authorization controls

- Traffic management and quota policies

- Standardized request/response mediation

- Operational telemetry for reliability and cost

For CTOs and enterprise architects, this is the core shift: move GenAI governance from app-by-app implementation to platform-enforced policy.

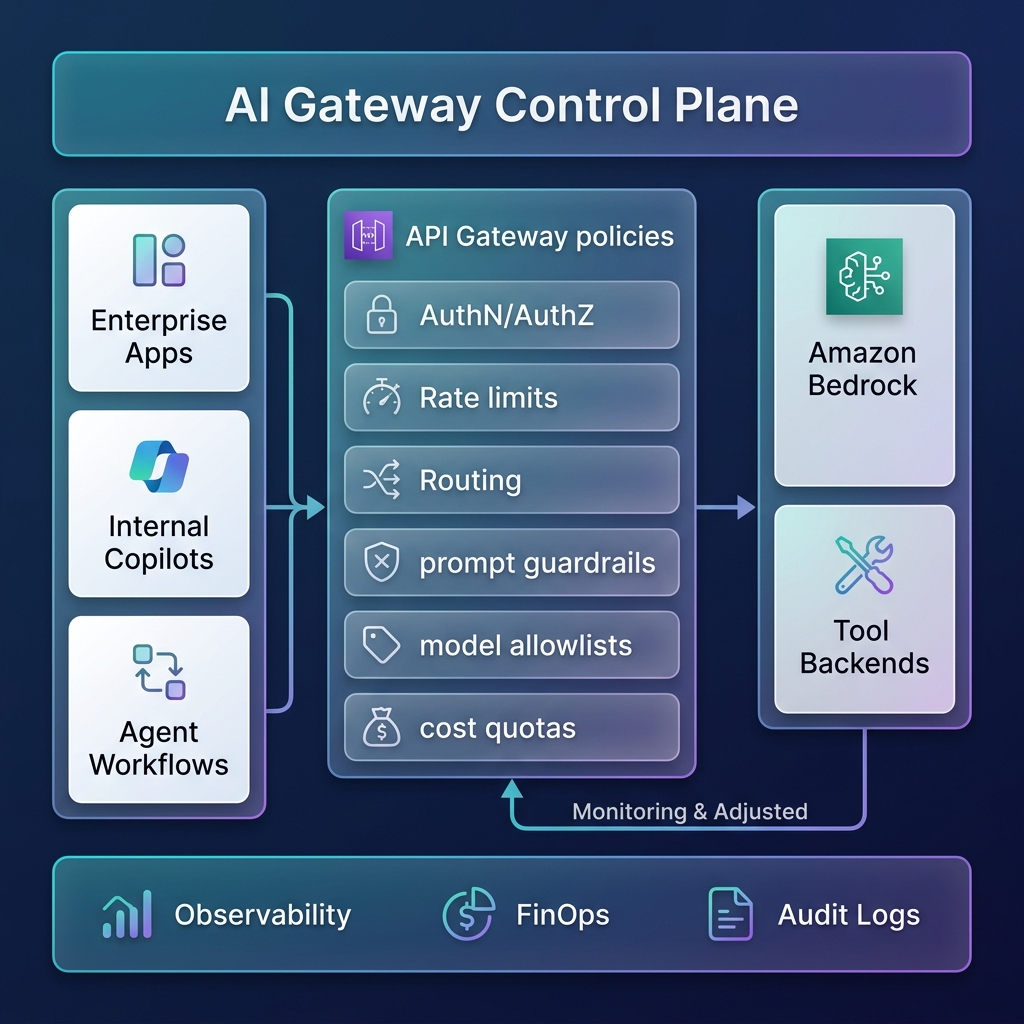

Reference architecture: AI gateway control plane

A practical enterprise shape is a four-layer model:

- Consumer layer: copilots, internal assistants, agent workflows, integrations.

- Policy layer (gateway): authentication, model allowlists, prompt/content controls, rate limits, budget limits.

- Inference layer: Bedrock models (different latency/cost profiles by workload class).

- Operations layer: tracing, SLO dashboards, token/cost analytics, audit evidence.

This gives architecture teams one place to define non-functional behavior: reliability, risk, and spend.
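To make the four layers concrete, here is a minimal sketch of a single request passing through them. It is illustrative only: `GatewayRequest`, `PolicyError`, `make_gateway`, and the model identifiers are hypothetical names, and the inference call is an injected function rather than a real Bedrock client.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set


@dataclass
class GatewayRequest:
    caller: str    # consumer layer: the app or agent making the call
    model_id: str  # requested model (hypothetical identifier)
    prompt: str


class PolicyError(Exception):
    """Raised when the policy layer rejects a request."""


def make_gateway(
    allowed_models: Dict[str, Set[str]],
    invoke_model: Callable[[str, str], str],
    telemetry_sink: List[dict],
) -> Callable[[GatewayRequest], str]:
    """Build a request handler that wires the layers together."""

    def handle(request: GatewayRequest) -> str:
        event = {"caller": request.caller, "model": request.model_id}

        # Policy layer: default-deny model allowlist per caller.
        if request.model_id not in allowed_models.get(request.caller, set()):
            telemetry_sink.append({**event, "outcome": "denied"})
            raise PolicyError(
                f"{request.caller} may not use {request.model_id}"
            )

        # Inference layer: injected so the sketch stays provider-agnostic.
        response = invoke_model(request.model_id, request.prompt)

        # Operations layer: one event per request, allowed or denied.
        telemetry_sink.append({**event, "outcome": "ok"})
        return response

    return handle


if __name__ == "__main__":
    events: List[dict] = []
    gateway = make_gateway(
        {"support-copilot": {"model-small"}},
        lambda model_id, prompt: f"[{model_id}] echo: {prompt}",
        events,
    )
    print(gateway(GatewayRequest("support-copilot", "model-small", "hello")))
    print(events)
```

The point of the shape is that consumers only ever see `handle`; allowlists, telemetry, and the model client can change behind it without touching application code.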

Architectural implications

1) Operating model: product teams consume capabilities, platform teams own controls

Without a gateway, every product team re-implements the same controls differently. With a gateway, the platform team can publish policy-backed AI interfaces as a product.

2) Governance: model choice becomes a governed decision

Allowlist and routing policies let you enforce which models are valid for which data class, workload type, or business domain.
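One way to express such a policy is a lookup table keyed by data class and workload type. The sketch below assumes hypothetical data classes, workload types, and model identifiers; a real policy would likely live in configuration rather than code.

```python
from typing import Dict, FrozenSet, Iterable, Tuple

# Hypothetical policy table: (data class, workload type) -> permitted models.
ALLOWLIST: Dict[Tuple[str, str], FrozenSet[str]] = {
    ("public", "interactive"): frozenset({"model-small", "model-large"}),
    ("internal", "interactive"): frozenset({"model-large"}),
    ("restricted", "batch"): frozenset({"model-private"}),
}


def is_model_allowed(data_class: str, workload_type: str, model_id: str) -> bool:
    """Default deny: unknown combinations permit no models."""
    return model_id in ALLOWLIST.get((data_class, workload_type), frozenset())


def route(data_class: str, workload_type: str, preferred: Iterable[str]) -> str:
    """Return the first preferred model the policy permits."""
    for model_id in preferred:
        if is_model_allowed(data_class, workload_type, model_id):
            return model_id
    raise PermissionError(
        f"no permitted model for {data_class}/{workload_type}"
    )
```

Default deny is a deliberate choice here: a new data class gets no model access until someone adds it to the table.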

3) Reliability: controlled degradation instead of random failure

A gateway lets you predefine fallback behavior (model downgrade, lower max output, queue shaping, circuit breakers) rather than inventing incident-time fixes.
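As a rough illustration of predefined fallback, the sketch below pairs a simple circuit breaker with an ordered model chain. The thresholds, class names, and the injected `invoke` function are assumptions for the example, not part of any AWS API.

```python
import time
from typing import Callable, Dict, List, Optional


class CircuitBreaker:
    """Opens after consecutive failures, then allows a trial call after a cool-down."""

    def __init__(self, failure_threshold: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self._opened_at is None:
            return True
        # Half-open: once the cool-down has elapsed, let a request through.
        return self._clock() - self._opened_at >= self.reset_after_s

    def record_success(self) -> None:
        self._failures = 0
        self._opened_at = None

    def record_failure(self) -> None:
        self._failures += 1
        if self._failures >= self.failure_threshold:
            self._opened_at = self._clock()


def invoke_with_fallback(
    prompt: str,
    chain: List[str],
    breakers: Dict[str, CircuitBreaker],
    invoke: Callable[[str, str], str],
) -> str:
    """Try each model in order, skipping any whose breaker is open."""
    last_error: Optional[Exception] = None
    for model_id in chain:
        breaker = breakers.setdefault(model_id, CircuitBreaker())
        if not breaker.allow():
            continue
        try:
            result = invoke(model_id, prompt)
        except Exception as exc:  # a real gateway would narrow this
            breaker.record_failure()
            last_error = exc
            continue
        breaker.record_success()
        return result
    raise RuntimeError("all models in the fallback chain are unavailable") from last_error
```

With a chain such as `["primary", "secondary"]`, a degraded primary is tried until its breaker opens, after which traffic goes straight to the secondary until the cool-down expires.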

4) FinOps: budget control becomes real-time, not month-end

Token and request telemetry at the control plane enables spend guardrails and showback by team, app, and workload lane.
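A spend guardrail can be sketched as a running cost tally checked on every request. The prices below are made-up placeholders, not Bedrock pricing, and `SpendTracker` is a hypothetical name; the arithmetic is simply tokens divided by 1,000 times the per-1K price, summed for input and output.

```python
from collections import defaultdict
from typing import Dict, Tuple

# Hypothetical prices per 1,000 tokens as (input, output). Not real pricing.
PRICE_PER_1K: Dict[str, Tuple[float, float]] = {
    "model-small": (0.001, 0.002),
    "model-large": (0.010, 0.030),
}


class SpendTracker:
    """Accumulates cost by (team, app, lane) and checks team budgets."""

    def __init__(self, budgets: Dict[str, float]):
        self.budgets = budgets
        self.spend: Dict[Tuple[str, str, str], float] = defaultdict(float)

    def record(self, team: str, app: str, lane: str, model_id: str,
               input_tokens: int, output_tokens: int) -> float:
        in_price, out_price = PRICE_PER_1K[model_id]
        cost = input_tokens / 1000 * in_price + output_tokens / 1000 * out_price
        self.spend[(team, app, lane)] += cost
        return cost

    def team_total(self, team: str) -> float:
        return sum(cost for (t, _, _), cost in self.spend.items() if t == team)

    def over_budget(self, team: str) -> bool:
        # Assumption: teams with no configured budget are treated as blocked.
        return self.team_total(team) >= self.budgets.get(team, 0.0)
```

Because the tally is keyed by team, app, and lane, the same data serves both the real-time guardrail (`over_budget`) and month-end showback (`spend`).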

30/60/90-day plan

30 days

- inventory Bedrock-consuming apps and agent flows;

- define 3 workload lanes (interactive, standard, batch/eval);

- establish minimum telemetry schema (tenant, app, workload class, model, token usage).
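The lanes and telemetry fields from this first phase could be captured in a small schema like the one below. This is a sketch: splitting token usage into input and output counts is an assumption, and the class and field names are illustrative.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class WorkloadLane(str, Enum):
    INTERACTIVE = "interactive"
    STANDARD = "standard"
    BATCH_EVAL = "batch_eval"


@dataclass(frozen=True)
class InferenceEvent:
    """Minimum telemetry record emitted once per model call."""
    tenant: str
    app: str
    workload_class: WorkloadLane
    model: str
    input_tokens: int   # assumption: token usage split by direction
    output_tokens: int

    def to_json(self) -> str:
        record = asdict(self)
        # Store the lane as its plain string value for downstream tools.
        record["workload_class"] = self.workload_class.value
        return json.dumps(record, sort_keys=True)
```

Agreeing on these few fields before the gateway exists means every later control (quotas, chargeback, audit) can key off the same record.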

60 days

- deploy a shared AI gateway baseline;

- enforce model allowlists and per-lane rate/timeout policies;

- add centralized audit logs and quota controls.
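Per-lane rate and timeout policies can be as simple as a table of limits plus a token bucket per lane. The numbers below are placeholder defaults, and `LanePolicy` and `TokenBucket` are hypothetical names; a managed gateway would typically provide throttling itself, so treat this as a sketch of the behavior rather than something to deploy.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class LanePolicy:
    requests_per_second: float
    burst: int
    timeout_s: float


# Hypothetical lane defaults; tune to your own SLOs.
LANE_POLICIES: Dict[str, LanePolicy] = {
    "interactive": LanePolicy(requests_per_second=50.0, burst=100, timeout_s=10.0),
    "standard": LanePolicy(requests_per_second=20.0, burst=40, timeout_s=30.0),
    "batch_eval": LanePolicy(requests_per_second=5.0, burst=10, timeout_s=120.0),
}


class TokenBucket:
    """Allows bursts up to `burst`, refilling at `requests_per_second`."""

    def __init__(self, policy: LanePolicy,
                 clock: Callable[[], float] = time.monotonic):
        self._rate = policy.requests_per_second
        self._capacity = float(policy.burst)
        self._tokens = float(policy.burst)
        self._clock = clock
        self._last = clock()

    def try_acquire(self) -> bool:
        now = self._clock()
        # Refill in proportion to elapsed time, capped at the burst size.
        self._tokens = min(self._capacity,
                           self._tokens + (now - self._last) * self._rate)
        self._last = now
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False
```

Keeping one bucket per lane means a batch evaluation run that exhausts its own budget cannot starve interactive traffic.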

90 days

- implement fallback policies and saturation playbooks;

- publish self-service onboarding for internal teams;

- run a monthly architecture review of SLO, cost, and policy drift.

Tradeoffs you should decide explicitly

- Central control vs team autonomy: stronger standards, slower edge-case experimentation unless governance is well-designed.

- Faster scaling vs platform dependency: more consistent delivery, but tighter coupling to your gateway and cloud-native primitives.

- Better cost visibility vs observability complexity: clear chargeback requires disciplined tagging and telemetry hygiene.

The takeaway

For enterprise GenAI, the winning pattern is not “pick the best model.”

It is: establish an AI gateway control plane that makes policy, reliability, and cost first-class architecture decisions.

Teams that do this early scale more safely and more quickly than teams that keep embedding model calls directly into every application.

Sources

- AWS Architecture: AI gateway for Bedrock

- AWS Architecture: conversational observability

- AWS Well-Architected GenAI Lens update

If you want, I can share a one-page AI Gateway policy starter pack (model allowlist template, lane defaults, and fallback policy matrix).

Comment “GATEWAY-PACK” with your primary cloud and whether your top use case is copilot, RAG, or autonomous agents.